Quick Facts

- Category: Science & Space

- Published: 2026-05-12 19:24:15

- Steam Controller Gets First Phone Mount: Basegrip Drops Monday

- How to Deploy GPT-5.5 in Microsoft Foundry for Enterprise AI Agents

- Google’s Workspace Icon Redesign Sparks Broader App Revamp: Exclusive Report

- The Humanoid Speed Revolution: A Guide to Engineering Record-Breaking Sprinters

- Linux 'sos' Command Emerges as a Rapid Diagnostic Powerhouse: 53 Seconds to Full System Snapshot

Overview

Dark energy remains one of the most profound mysteries in cosmology. It accounts for about 70% of the universe's energy density, yet its nature is unknown. A new approach combining artificial intelligence (AI) with data from the upcoming Vera C. Rubin Observatory aims to solve this puzzle by refining the use of Type 1a supernovae as "standard candles." This guide explains how scientists are rethinking these cosmic explosions to hunt for "unknown unknowns"—missing ingredients in our current model of the universe. By the end, you'll understand the methodology, prerequisites for participating, and common pitfalls.

Prerequisites

Technical Background

- Basic knowledge of cosmology: expansion of the universe, Hubble's law, and the concept of dark energy.

- Familiarity with Type 1a supernovae: what they are, how they form, and why they are used as standard candles.

- Understanding of machine learning concepts: supervised vs unsupervised learning, neural networks, and feature engineering.

- Programming skills in Python (preferred) for data analysis; familiarity with libraries like TensorFlow, PyTorch, or scikit-learn.

Equipment and Software

- Access to a computer with at least 16 GB RAM for processing simulated Rubin Observatory datasets.

- Installed software: Python 3.8+, Jupyter Notebook, and relevant ML libraries.

- Optional: GPU for training deep learning models on large supernova light curves.

Data Access

- Rubin Observatory will release simulated survey data via the Data Preview 0 (DP0) program. Familiarize yourself with the Rubin science data management system.

- Existing supernova datasets (e.g., from the Dark Energy Survey or Pan-STARRS) for training and validation.

Step-by-Step Guide to Using AI and Rubin Data for Dark Energy Studies

Step 1: Understand the Role of Type 1a Supernovae as Standard Candles

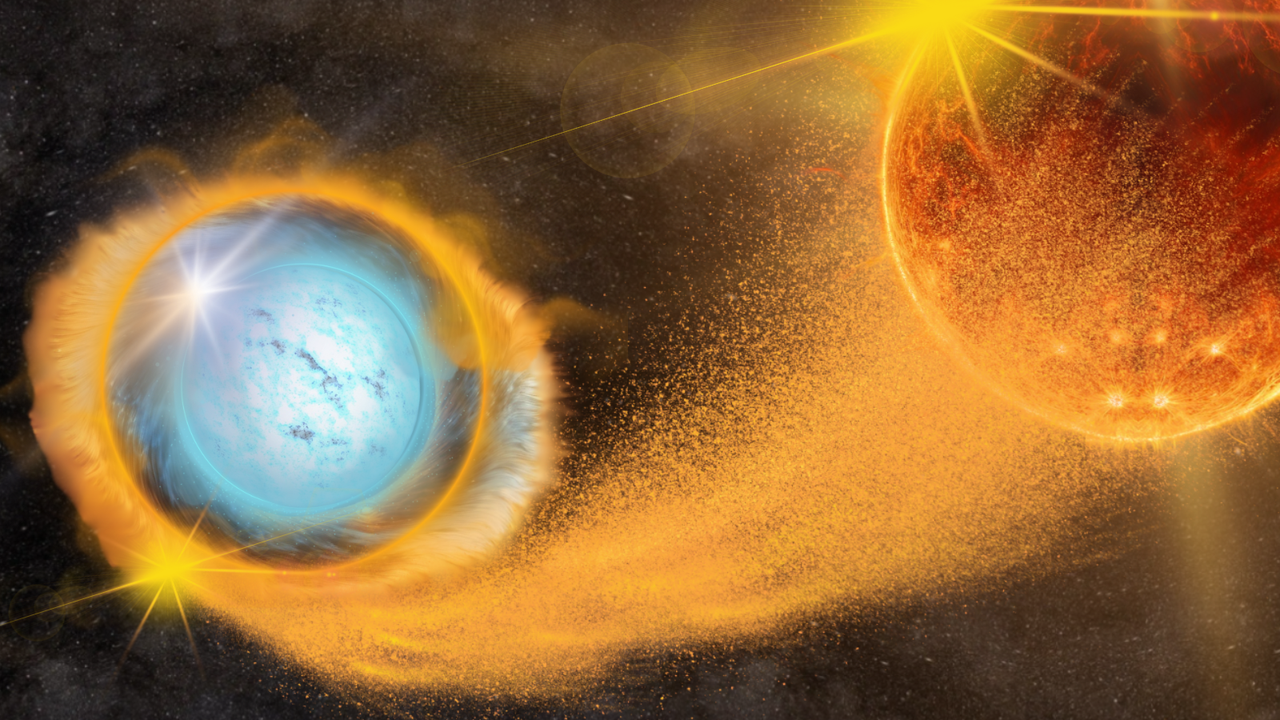

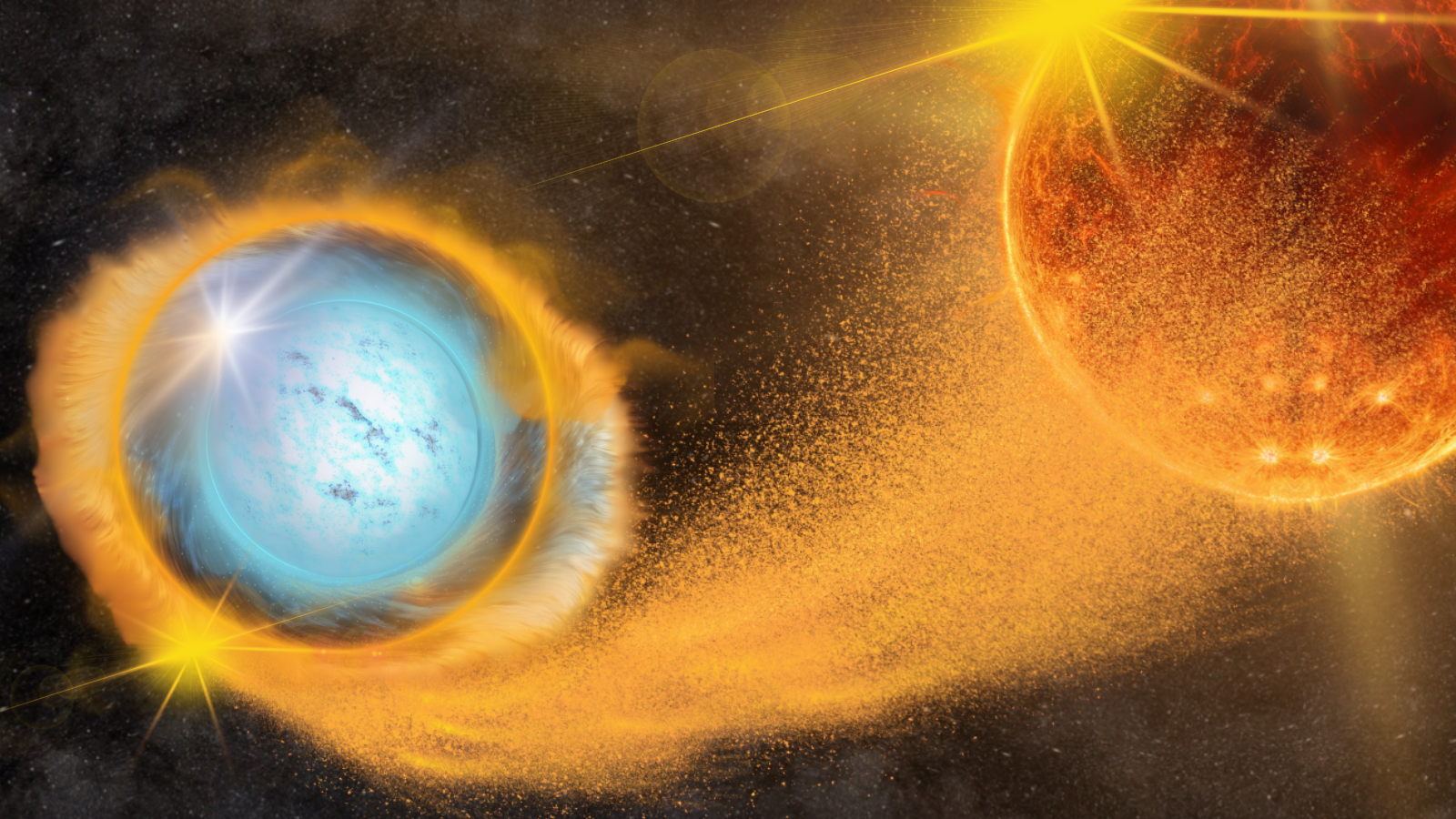

Type 1a supernovae originate from white dwarfs in binary systems that accrete matter until they reach the Chandrasekhar limit and explode. Their peak absolute brightness is remarkably consistent, allowing astronomers to measure cosmic distances by comparing observed brightness to known intrinsic luminosity. This distance measurement, combined with redshift, reveals the expansion history of the universe—and thus the influence of dark energy. However, systematic uncertainties arise from factors like dust extinction, intrinsic brightness variations, and unknown evolutionary effects. The Rubin Observatory will detect millions of such supernovae, providing an unprecedented sample to reduce these uncertainties—but only if we can properly model the data.

Step 2: Leverage AI to Handle Massive Data Volumes

The Rubin Observatory's Legacy Survey of Space and Time (LSST) will produce 20 terabytes of data per night. Traditional manual analysis is impossible. AI algorithms, particularly deep learning, can automatically classify supernovae, reject contaminants (like variable stars or active galactic nuclei), and estimate distances directly from light curves without relying on human-specified features. The key is to train models on simulated data that incorporate known physical effects, then apply them to real observations. This step involves:

- Data simulation: Use the Rubin's simulation framework to generate synthetic supernova light curves with realistic noise, cadence, and observational effects.

- Feature extraction: Rather than hand-crafted features (e.g., stretch factor, color), let a convolutional neural network learn patterns from the time-series flux measurements.

- Distance estimation: Train a regression model that directly outputs luminosity distance from a light curve, bypassing the traditional Phillips relation.

For example, a simple LSTM network can take a sequence of flux values in multiple filters (ugrizy) and predict a standardized distance modulus. Code snippet (Python using PyTorch):

import torch.nn as nn

class SupernovaDistanceNet(nn.Module):

def __init__(self, input_size=6, hidden_size=128):

super(SupernovaDistanceNet, self).__init__()

self.lstm = nn.LSTM(input_size, hidden_size, batch_first=True)

self.fc = nn.Linear(hidden_size, 1)

def forward(self, x):

out, _ = self.lstm(x)

out = out[:, -1, :] # take last time step

return self.fc(out)Train on simulated data with known distances, then test on hold-out sets to quantify bias.

Step 3: Hunt for Unknown Unknowns

Even with perfect AI models, dark energy inferences may be biased by unknown phenomena that alter supernova properties over cosmic time. These "unknown unknowns" could be changes in progenitor populations, metallicity effects, or dust properties. To find them, scientists will use unsupervised learning—clustering and anomaly detection—to identify supernovae that deviate from the standard model. Steps:

- Apply an autoencoder to reconstruct light curves; high reconstruction error indicates anomalies.

- Run a t-SNE or UMAP projection of latent features to visualize subgroups.

- Cross-reference anomalous supernovae with spectroscopic follow-up to identify new classes or environmental effects.

This approach mimics the scientific method: let the data suggest new physics, then design experiments to confirm.

Step 4: Integrate Rubin Observatory Data

Once the Rubin Observatory begins operations (expected 2024-2025), data will be released in annual data releases (DR1, DR2, etc.). For dark energy studies, follow these steps:

- Access the Rubin Data Access Center (DAC) via the LSST Science Platform.

- Query the supernova alert stream using the Kafka broker to get early light curves.

- Apply your trained AI model to each candidate for real-time classification and distance estimation.

- Combine results with redshift measurements from spectroscopic surveys (e.g., DESI, the Dark Energy Spectroscopic Instrument) to build a Hubble diagram.

- Fit cosmological parameters (Ω_m, w) using a standard Markov Chain Monte Carlo (MCMC) approach, but with the AI-derived distances that include systematic error estimates.

See the official Rubin documentation for detailed API examples: rubinpython.example.com (placeholder link).

Common Mistakes

Overfitting to Simulated Data

Simulations are only approximations of reality. A model that works perfectly on synthetic data may fail on real observations due to unmodeled noise or systematic effects. Always validate with real survey data (e.g., from the Dark Energy Survey) and use robust cross-validation. Mitigation: incorporate domain randomization in simulations (varying cadence, seeing, etc.) and apply Monte Carlo dropout for uncertainty quantification.

Ignoring Selection Effects

Type 1a supernovae are not uniformly detected; brighter ones are more likely to be found at high redshift. This Malmquist bias must be modeled. AI models trained on magnitude-limited samples will inherit this bias. Solution: use a forward-modeling approach: simulate the survey selection function and include it in the likelihood.

Misinterpreting Anomalies as New Physics

Unsupervised learning can flag outliers, but many are simply data artifacts (e.g., cosmic ray hits, satellite streaks) or known rare subtypes (e.g., super-Chandrasekhar supernovae). Always perform careful vetting with human inspection and dedicated follow-up before claiming a discovery.

Neglecting Host Galaxy Information

The host galaxy's mass, stellar age, and dust content affect supernova brightness. AI models that ignore host properties may produce biased distances. Incorporate host photometry or spectroscopic features as auxiliary inputs to the neural network.

Summary

Combining AI with the Rubin Observatory's vast dataset offers a powerful path to refining Type 1a supernovae as cosmological probes and uncovering potential new physics behind dark energy. By replacing manual feature extraction with deep learning, scientists can reduce systematic uncertainties and scale to millions of events. Hunting for unknown unknowns via anomaly detection ensures that unexpected phenomena don't hide in the data. This tutorial outlined the prerequisites, step-by-step approach, and common pitfalls. The next decade will likely bring transformative insights into the nature of dark energy—thanks to these technological advances.